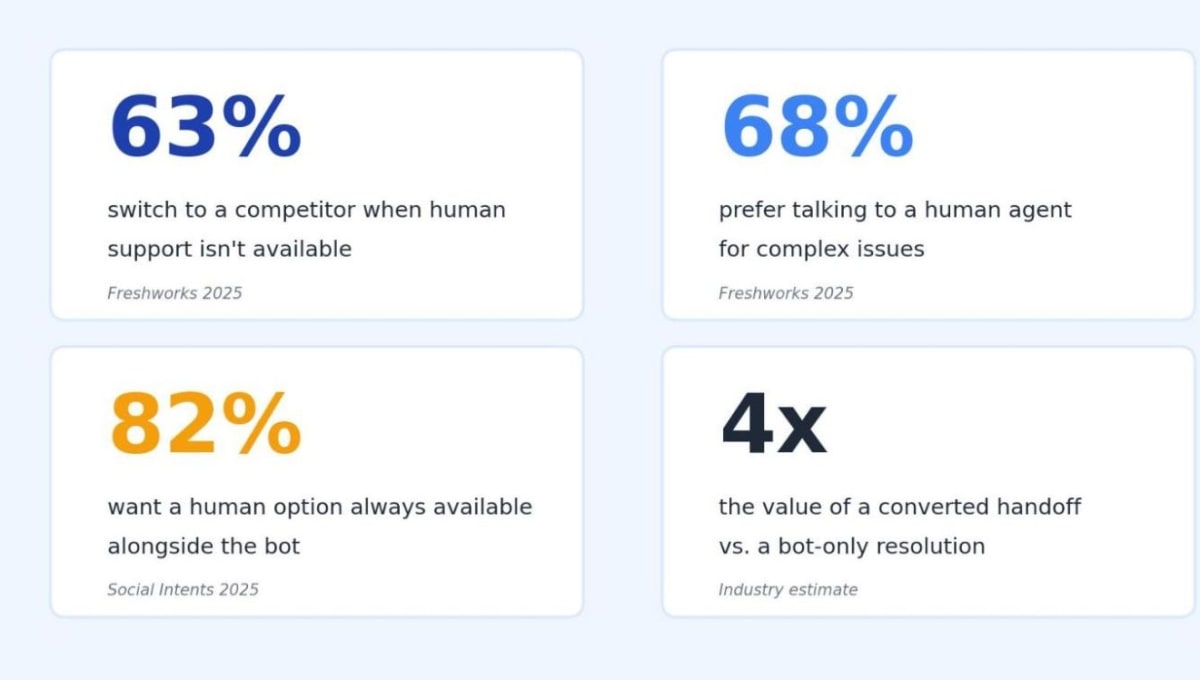

Research shows that the majority of customers will take their business elsewhere if human support isn’t available, even when an AI bot is handling the conversation well (Freshworks, 2025). Most stores build their bot and live chat as separate tools and never design the connection between them.

This post walks through the complete chatbot to human handoff process: when to escalate, how to design the transition, and the 5 metrics that prove your flow actually converts. You’ll get triggers, copy templates, and the technical setup most teams skip, all in one place.

What A Chatbot To Human Handoff Means For eCommerce

A chatbot-to-human handoff is the structured transition that moves a customer from an automated bot conversation to a live human agent without losing context, breaking flow, or making the customer repeat themselves. It happens when the bot recognizes a query is out of scope, the customer asks for a person, or sentiment signals frustration.

The handoff matters more than ever in eCommerce right now. AI chatbots can handle up to 80% of routine eCommerce queries automatically, but 82% of consumers want a human option available for anything complex or sensitive. Live chat alone can’t keep up with volume, and a pure bot drops the customer the moment a question moves past the FAQ.

The hybrid model is what works. Bots take the high-volume routine work, humans take the high-value complex work, and the handoff is the bridge connecting them. Smartsupp’s analysis of 5 billion website visits across 175,000 eCommerce accounts showed that stores combining bots with live agents convert at substantially higher rates than stores running either tool in isolation.

Done well, the customer barely notices the transition aside from the relief of getting real help. Done badly, the bridge is where the sale dies.

5 Failure Modes That Wreck Chatbot To Human Handoff Flows

Most teams handle the technical handoff fine, but underbuild the customer-facing experience around it. 5 failure modes show up across almost every store I’ve audited, and every one of them is fixable with conversation design rather than new technology.

1. The forced repeat. The bot asks for name, order number, and issue type. The agent picks up the conversation and asks for the name, order number, and issue type. The customer immediately disengages because the bot collected info that didn’t transfer.

2. The dead-end loop. The bot doesn’t know how to escalate, the “talk to a human” button isn’t visible, and the customer has to type “agent” 3 times before anything happens. By message 4, they’ve already opened a competitor’s site.

3. The cold transfer. The agent joins with no context, no transcript, and no read on what the customer was trying to do. The customer has to summarize the entire conversation from scratch.

4. The wrong agent. The bot routes a billing dispute to a generalist support rep instead of the dedicated billing team. The agent has to triage and re-route, adding 5 to 10 minutes to a query that was already frustrating.

5. The silent wait. No “an agent will be with you in 90 seconds” message, no queue position, no expected wait time. The customer sits in a blank chat box and assumes nothing’s happening.

Categories with regulatory or compliance complexity get hit hardest by these failures. Take functional plant-based products. A customer asking about THC content, drug-test interactions, or product legality in their state needs a human trained on the legal nuance.

A bot that answers incorrectly creates real liability. That’s exactly where herbal wellness stores like Quiet Monk become a strong reference point for this category. In stores like this, a chatbot might confidently answer basic product questions, but the moment the conversation shifts into THC thresholds or legality by jurisdiction, the flow has to move to a trained human who understands compliance boundaries and product nuance.

In that setup, a failed handoff isn’t just a broken UX moment. It can turn into a compliance exposure where the customer receives unclear guidance on a regulated product category, and that gap compounds over time across repeated interactions.

The fix for all 5 failures is the same. Design the handoff before you build the bot, not after.

7 Tactics To Design A Chatbot To Human Handoff That Converts

These tactics build on each other, so work through them in order, even if tactic 5 looks more interesting on the surface.

1. Map Your Handoff Triggers Before You Build Anything

A chatbot to human handoff only works if the bot knows when to step aside. Triggers fall into 5 categories, and your bot needs explicit rules for each:

- Complexity triggers: queries that span multiple systems, custom orders, and refund disputes

- Sentiment triggers: frustration markers like “this is ridiculous,” “I want to speak to someone,” repeated rephrasing

- Keyword triggers: specific terms that flag immediate escalation (fraud, emergency, cancel)

- Time triggers: conversations exceeding 4 to 5 turns without resolution

- Customer-tier triggers: VIP, repeat buyers, high-LTV accounts that always get human routing

Write these out before you build a single bot flow. The teams I’ve watched succeed at this have built a 1-page trigger matrix as their first deliverable. Teams that fail skip this step and try to add triggers retroactively, which never works because the bot’s knowledge base wasn’t designed around them.
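One way to make the trigger matrix concrete is to write it as data plus a single check function. The sketch below is a minimal illustration, not a production classifier: the names (`Conversation`, `match_triggers`), the phrase lists, and the turn threshold are all hypothetical placeholders, and the matching is naive substring search. It assumes the bot already tags each conversation with an intent label.

```python
from dataclasses import dataclass

# Hypothetical trigger matrix: every phrase, keyword, and threshold here is a
# placeholder to replace with values from your own transcript audit.
SENTIMENT_PHRASES = ("this is ridiculous", "i want to speak to someone")
ESCALATION_KEYWORDS = ("fraud", "emergency", "cancel")
COMPLEX_INTENTS = {"custom_order", "refund_dispute"}
HUMAN_ROUTED_TIERS = {"vip", "repeat", "high_ltv"}
MAX_UNRESOLVED_TURNS = 5


@dataclass
class Conversation:
    messages: list[str]            # customer messages, oldest first
    intent: str = "unknown"        # assumed to come from the bot's intent detection
    customer_tier: str = "standard"
    resolved: bool = False


def match_triggers(convo: Conversation) -> list[str]:
    """Return the name of every trigger category the conversation hits."""
    text = " ".join(convo.messages).lower()
    hits = []
    if convo.intent in COMPLEX_INTENTS:
        hits.append("complexity")
    if any(phrase in text for phrase in SENTIMENT_PHRASES):
        hits.append("sentiment")
    if any(keyword in text for keyword in ESCALATION_KEYWORDS):
        hits.append("keyword")
    if not convo.resolved and len(convo.messages) >= MAX_UNRESOLVED_TURNS:
        hits.append("time")
    if convo.customer_tier in HUMAN_ROUTED_TIERS:
        hits.append("customer_tier")
    return hits


convo = Conversation(
    messages=["Where is my order?", "This is ridiculous, I think it's fraud"],
    customer_tier="vip",
)
print(match_triggers(convo))  # ['sentiment', 'keyword', 'customer_tier']
```

Returning every matched category, rather than stopping at the first, keeps the data you need later to see which triggers fire most often.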

2. Set Keyword And Category Escalation Rules That Match Your Vertical

Different eCommerce verticals need different handoff thresholds. A standard apparel store can probably let the bot handle 90% of conversations, since most queries are sizing, shipping, and returns. A high-information vertical needs the opposite default.

Take nootropics and supplements. This is where chatbot confidence becomes a real liability if it’s misplaced. Customers aren’t asking surface-level questions. They’re asking things like “can I stack this with L-theanine and still stay under a safe daily limit,” or “does this interact with SSRIs,” or “where’s the third-party COA for this batch.”

That’s where Nootropics Depot becomes a sharp example of why tighter escalation rules matter. Their catalog goes deep into compounds like racetams, adaptogens, and standardized extracts. A customer browsing there moves quickly from product discovery into highly specific questions about dosage ranges, bioavailability, or lab testing.

Many of those answers depend on context that the bot simply doesn’t have, like the customer’s existing supplement stack, sensitivity levels, or medical background.

Now layer in something even more specific: third-party testing. A customer might ask for a certificate of analysis tied to a specific batch number. That’s not just an FAQ lookup. It requires pulling the right document, understanding what the markers mean, and sometimes clarifying how to read it. If a bot tries to answer that generically, it risks giving incomplete or misleading guidance.

In this kind of setup, the escalation logic has to kick in early. Not because the bot failed, but because the question itself crosses into territory where precision matters more than speed.

That’s why stores like Nootropics Depot benefit from keyword and category triggers that are far stricter than a typical eCommerce setup. Terms like “stack,” “interaction,” “dosage,” “side effects,” or “lab results” should immediately route to a trained human. Even if the bot technically has content related to those topics, the cost of being slightly wrong is too high.

The rule of thumb: if the wrong answer to a customer question can hurt them or expose you legally, the bot should escalate by default. If the right answer sits in your help docs and the question is unambiguous, the bot can handle it. Map your top 50 customer queries against this 2-question filter, and your trigger logic will mostly write itself.
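The 2-question filter can be written down as a tiny routing function. This is a rough sketch that assumes someone has hand-tagged each of the top queries with three yes/no flags. The `QueryProfile` type and `default_route` function are hypothetical names, and sending anything unclear to a human is an added assumption, since the rule of thumb only covers the two clear cases.

```python
from dataclasses import dataclass


@dataclass
class QueryProfile:
    text: str
    wrong_answer_is_risky: bool   # could a wrong answer hurt the customer or expose the store legally?
    answer_in_help_docs: bool     # is the right answer already documented?
    unambiguous: bool             # can the question be read only one way?


def default_route(query: QueryProfile) -> str:
    """Apply the 2-question filter: risk first, then documentation coverage."""
    if query.wrong_answer_is_risky:
        return "human"
    if query.answer_in_help_docs and query.unambiguous:
        return "bot"
    # Assumption: anything the filter doesn't clearly give to the bot goes to a human.
    return "human"


queries = [
    QueryProfile("Does this interact with SSRIs?", True, False, True),
    QueryProfile("What is your return window?", False, True, True),
]
for query in queries:
    print(f"{query.text} -> {default_route(query)}")
```

Checking risk before documentation coverage is deliberate: a risky question escalates even when the help docs contain an answer.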

⚠️ Common Mistake

Many stores set escalation rules around what the bot can’t answer. The better question is what the bot shouldn’t answer, even if it technically could. A bot trained on your help docs might confidently quote a return policy that’s been revised since, or recommend a dosage that’s now off-label.

3. Design The Wait Window So Customers Don’t Bounce

The wait between bot escalation and live agent pickup is where most handoffs lose the customer. 30 seconds without a message feels like 5 minutes when you’re stuck. The fix is active wait management.

Every handoff should fire 3 messages in sequence:

- Immediate confirmation: “I’m connecting you with [Agent Name].” Sets expectation, names the next person.

- Queue update: “Your estimated wait is 90 seconds.” Concrete number, not a vague “soon.”

- Engagement prompt: “While you wait, here’s [a relevant help doc, video, or order status check].” Keeps the customer doing something.

If the wait runs longer than 2 minutes, send a second update. The customer should see a sign of progress every 60 to 90 seconds and should never be left sitting in a black hole.
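As a rough sketch, the wait window comes down to a message builder and a timer check. The function names below are hypothetical, and the code assumes your chat platform can call them when a handoff starts and on a recurring timer. The 90-second default comes from the upper end of the 60 to 90 second range.

```python
def wait_window_messages(agent_name: str, est_wait_seconds: int, resource: str) -> list[str]:
    """Build the 3 messages that fire in sequence when a handoff starts."""
    return [
        f"I'm connecting you with {agent_name}.",
        f"Your estimated wait is {est_wait_seconds} seconds.",
        f"While you wait, here's {resource}.",
    ]


def follow_up_due(seconds_since_last_message: int, interval_seconds: int = 90) -> bool:
    """True once the customer has gone a full interval without hearing anything."""
    return seconds_since_last_message >= interval_seconds


for message in wait_window_messages("Maya", 90, "our returns guide"):
    print(message)
```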

4. Pass Full Context To The Agent In One Block

Cold transfers are the single biggest CSAT killer in handoff design. The agent should join the conversation with the entire transcript visible, plus a summary block at the top showing customer name, account tier, current issue, what the bot already tried, and any sentiment signals.

A clean context block looks like this:

- CUSTOMER: Sarah K. (VIP tier, 3 prior orders)

- ISSUE: Refund request, order #4521

- BOT TRIED: Verified order, offered store credit

- SENTIMENT: Mildly frustrated (mentioned “this is taking too long” once)

- SUGGESTED RESPONSE: Direct refund approval given VIP status

Agents trained to read this block before typing their first message resolve handoff conversations 30 to 50% faster than agents starting cold. The first message they send should reference the context: “Hi Sarah, I see you’re looking for a refund on order #4521. I can process that directly, given your account history.”

That single sentence tells the customer the agent is up to speed, which closes the loop on every fear they had during the wait.
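The context block is easy to generate automatically once the bot stores what it collected. Here is a minimal sketch, assuming a hypothetical `HandoffContext` record filled in by the bot. The field names are illustrative and don't belong to any particular chat platform.

```python
from dataclasses import dataclass


@dataclass
class HandoffContext:
    customer_name: str
    account_tier: str
    prior_orders: int
    issue: str
    bot_tried: list[str]
    sentiment: str
    suggested_response: str


def render_context_block(ctx: HandoffContext) -> str:
    """Format the summary that sits above the transcript in the agent's view."""
    lines = [
        f"CUSTOMER: {ctx.customer_name} ({ctx.account_tier} tier, {ctx.prior_orders} prior orders)",
        f"ISSUE: {ctx.issue}",
        f"BOT TRIED: {', '.join(ctx.bot_tried)}",
        f"SENTIMENT: {ctx.sentiment}",
        f"SUGGESTED RESPONSE: {ctx.suggested_response}",
    ]
    return "\n".join(lines)


ctx = HandoffContext(
    customer_name="Sarah K.",
    account_tier="VIP",
    prior_orders=3,
    issue="Refund request, order #4521",
    bot_tried=["Verified order", "offered store credit"],
    sentiment="Mildly frustrated",
    suggested_response="Direct refund approval given VIP status",
)
print(render_context_block(ctx))
```

Keeping the block as structured fields rather than free text also makes it possible to check later whether an agent re-asked for something the bot already had.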

5. Build The Technical Infrastructure That Connects Chat, CRM, And Help Desk

The handoff design lives across 3 systems: the chat platform, the CRM (customer history, order data, account tier), and the help desk (ticket tracking, agent assignment, SLA monitoring). Most stores have all 3 systems but no automation connecting them, which is why handoffs lose data on the way through.

The fix is workflow automation. Tools like Zapier and Make handle simple use cases. For more complex routing, where you’re connecting chat events to CRM updates, ticket creation, Slack notifications, and product data lookups in a single flow, custom workflow builds give you more control.

Teams without an internal automation engineer often work with an n8n expert partner to build the orchestration layer that sits between the chat platform, CRM, and help desk, since n8n handles the multi-step branching that simpler tools can’t. For example, instead of a generic “create ticket on escalation” flow, they’ll structure it so that:

- A high-LTV customer triggers a priority ticket with SLA tagging

- A refund-related query pulls payment data before assignment

- A compliance-related keyword routes directly to a trained specialist queue

- The agent view is preloaded with order data, tags, and conversation summary

That level of control is hard to maintain inside tools like Zapier once the flow grows beyond a few steps. n8n handles deeper branching and conditional logic, but it also requires someone who knows how to structure those workflows cleanly so they don’t break under edge cases.

The infrastructure check: when the bot escalates, does the agent’s screen automatically populate with the order data, the chat transcript, the customer record, and a pre-filled ticket? If any of those steps require manual lookup, the handoff is leaking time and context. Fix the plumbing before you scale agents.
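To show the kind of branching involved, here is a sketch of the routing rules in plain Python. It is not n8n's workflow format, and none of the names map to a real CRM or help desk API. The LTV threshold, keyword list, queue names, and prefetch labels are all hypothetical placeholders.

```python
from dataclasses import dataclass, field

# Placeholder values: tune the threshold and keyword list to your own store.
HIGH_LTV_THRESHOLD = 1000.0
COMPLIANCE_KEYWORDS = ("thc", "drug test", "legal", "dosage", "interaction")


@dataclass
class Escalation:
    customer_ltv: float
    issue_type: str      # e.g. "refund" or "shipping"
    last_message: str


@dataclass
class Ticket:
    queue: str = "general"
    priority: str = "normal"
    tags: list[str] = field(default_factory=list)
    # Data to load into the agent view before the ticket is assigned.
    prefetch: list[str] = field(
        default_factory=lambda: ["order_data", "transcript", "summary"]
    )


def build_ticket(event: Escalation) -> Ticket:
    """Translate one escalation event into routing decisions for the help desk."""
    ticket = Ticket()
    if event.customer_ltv >= HIGH_LTV_THRESHOLD:
        ticket.priority = "high"
        ticket.tags.append("sla")
    if event.issue_type == "refund":
        ticket.prefetch.append("payment_data")
    message = event.last_message.lower()
    if any(keyword in message for keyword in COMPLIANCE_KEYWORDS):
        ticket.queue = "compliance_specialist"
        ticket.tags.append("compliance")
    return ticket


print(build_ticket(Escalation(2500.0, "refund", "Is this legal in my state?")))
```

The rules are independent checks rather than an if/else chain, so one escalation can be high priority, refund-related, and compliance-routed at the same time.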

6. Train Agents To Acknowledge First, Solve Second

The agent’s opening message decides whether the customer relaxes or stays tense. Acknowledge before solving.

Bad opening: “How can I help?” The customer feels the bot was a waste and has to re-explain everything.

Good opening: “Hi Sarah, I see you’ve been working with our assistant on the refund for order #4521. I can process that for you right now.”

The first message should always use the customer’s name, reference the issue from the bot transcript, and state the next action clearly. Train agents on this with 5 to 10 example handoff conversations during onboarding. Most CS teams skip this and treat handoffs like fresh chats, which is why their post-handoff CSAT runs 15 to 20 points lower than their bot-only or live-only conversations.

7. Measure Handoff Outcomes Beyond Raw Volume

Most teams track how many handoffs happen. That tells you nothing about whether the handoffs are working. The metrics that matter come in pairs:

- Handoff trigger rate vs. handoff success rate: how often the bot escalates vs. how often the customer’s issue resolves after escalation

- Time to handoff vs. time in handoff: how fast the bot decides to escalate vs. how long the agent takes after pickup

- Pre-handoff sentiment vs. post-handoff sentiment: did the conversation get better after the agent joined, or worse

If your handoff success rate sits below 75% or your post-handoff CSAT falls below your live-chat-only CSAT, the handoff is breaking somewhere. Audit the failure modes from the previous section and fix the weakest one first.
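That audit rule is simple enough to put in a weekly report. Here is a minimal sketch with hypothetical function names. It assumes you can already count resolved handoffs and that both CSAT figures are on the same scale.

```python
def handoff_success_rate(resolved_after_handoff: int, total_handoffs: int) -> float:
    """Share of escalations where the issue resolved after the agent picked up."""
    if total_handoffs == 0:
        return 0.0
    return resolved_after_handoff / total_handoffs


def handoff_needs_audit(
    success_rate: float,
    post_handoff_csat: float,
    live_chat_csat: float,
    min_success_rate: float = 0.75,
) -> bool:
    """Flag the flow when either threshold from the rule above is missed."""
    return success_rate < min_success_rate or post_handoff_csat < live_chat_csat


rate = handoff_success_rate(resolved_after_handoff=140, total_handoffs=200)
print(handoff_needs_audit(rate, post_handoff_csat=85, live_chat_csat=82))  # True
```

In the example, 140 of 200 handoffs resolved, a 70% success rate, so the flow gets flagged even though post-handoff CSAT beats live-chat CSAT.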

📊 By The Numbers

A handoff conversation that converts is worth roughly 4x more than a bot-only resolution in most ecommerce categories. The agent typically closes a higher-value sale or saves a refund/cancellation from going through. Track the dollar value, not just the count.

Chatbot To Human Handoff Vs Pure Bot Or Pure Live Chat

Most stores get stuck choosing between a pure bot deployment and a pure live chat operation. Both have failure modes that the hybrid handoff model solves directly.

| Approach | Strength | Weakness | Best For |
| --- | --- | --- | --- |
| Pure bot | Handles volume, low cost, 24/7 | Drops complex queries, no judgment | Simple FAQ, low-AOV stores |
| Pure live chat | High empathy, complex resolution | Doesn't scale, expensive, hours-limited | High-AOV, low-volume stores |
| Hybrid handoff | Volume + complexity + 24/7 | Requires design and infrastructure | Most eCommerce stores at scale |

The hybrid model only works if the handoff is built well. A poorly designed handoff is worse than a pure bot because it adds latency and frustration on top of the bot’s existing limitations. Done right, combining chatbots and live chat in one designed flow is what makes the model deliver on both volume and quality.

Smaller stores should run the pure bot or pure live chat model until they hit the volume threshold where one or the other starts breaking. Most teams hit that threshold around 200 to 400 inbound chats per week, which is when the hybrid handoff becomes both necessary and economically worth the design investment.

Your 30-Day Chatbot To Human Handoff Sprint

You don’t need a 6-month transformation project to fix this. A focused 30-day sprint can take a store from broken handoffs to converting handoffs.

Week 1: Audit and map. Pull transcripts from your last 200 chatbot conversations. Tag every conversation that resulted in a handoff. Note which failure modes (forced repeat, dead-end loop, cold transfer, wrong agent, silent wait) showed up most often. Build your trigger matrix from the patterns you find.

Benchmark for end of week 1: a 1-page document showing your top 5 handoff triggers, your top 5 failure modes by frequency, and your current handoff success rate.

Common trap: relying on aggregate analytics instead of reading actual transcripts. The patterns hide in conversation flow, never in a metric dashboard.
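Once the transcripts are tagged by hand, the frequency ranking for the week 1 document is a short tally. The sketch below assumes each handoff transcript has been given a list of failure-mode tags. The tag spellings and the `rank_failure_modes` name are hypothetical.

```python
from collections import Counter

FAILURE_MODES = (
    "forced_repeat",
    "dead_end_loop",
    "cold_transfer",
    "wrong_agent",
    "silent_wait",
)


def rank_failure_modes(tagged_transcripts: list[list[str]]) -> list[tuple[str, int]]:
    """Count each known failure mode across transcripts, most frequent first."""
    counts = Counter(
        tag
        for tags in tagged_transcripts
        for tag in tags
        if tag in FAILURE_MODES  # ignore typos and ad-hoc tags
    )
    return counts.most_common()


tagged = [
    ["cold_transfer"],
    ["cold_transfer", "silent_wait"],
    [],
    ["forced_repeat", "cold_transfer"],
]
print(rank_failure_modes(tagged)[0])  # ('cold_transfer', 3)
```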

Week 2: Restructure the bot. Add explicit handoff triggers based on your week 1 audit. Add the wait window message sequence (3 messages: confirmation, queue update, engagement prompt). Build the context block template that fires every time a handoff happens.

Benchmark for end of week 2: bot now hands off based on triggers (not just direct user request), and every handoff fires the 3-message wait window automatically.

Common trap: trying to handle every edge case in week 2. Cover the top 5 trigger types first, then iterate.

Week 3: Connect the systems. Wire up the bot to the CRM and help desk so context passes automatically. Pre-fill the agent’s context block. Set up routing rules so handoffs go to the right team based on issue type.

Benchmark for end of week 3: agents pick up handoffs with zero manual lookup. Customer name, order data, transcript, and suggested action all auto-populate.

Common trap: scope creep on the integration build. Start with chat-to-CRM, add ticketing later if needed.

Week 4: Train and measure. Run agent training on the new handoff flow with 5 to 10 example conversations. Set up dashboards tracking the metric pairs from tactic 7. Run a weekly review where the team looks at 5 handoff transcripts together and tags what worked or didn’t.

Benchmark for end of week 4: agents are using the acknowledge-first opening template, and the weekly review is documented and on the calendar.

Common trap: skipping the agent training because “they’ll figure it out.” Untrained agents revert to cold-transfer behavior within 2 weeks, which makes the entire infrastructure investment wasted.

💡 Pro Tip

Don’t try to fix all 5 failure modes at once. Pick the 1 that hurts your CSAT most and ship the fix in week 2. Use weeks 3 and 4 for the next 2 failure modes. The remaining ones can wait until month 2. Compounding small wins beats trying to perfect the whole system in 30 days.

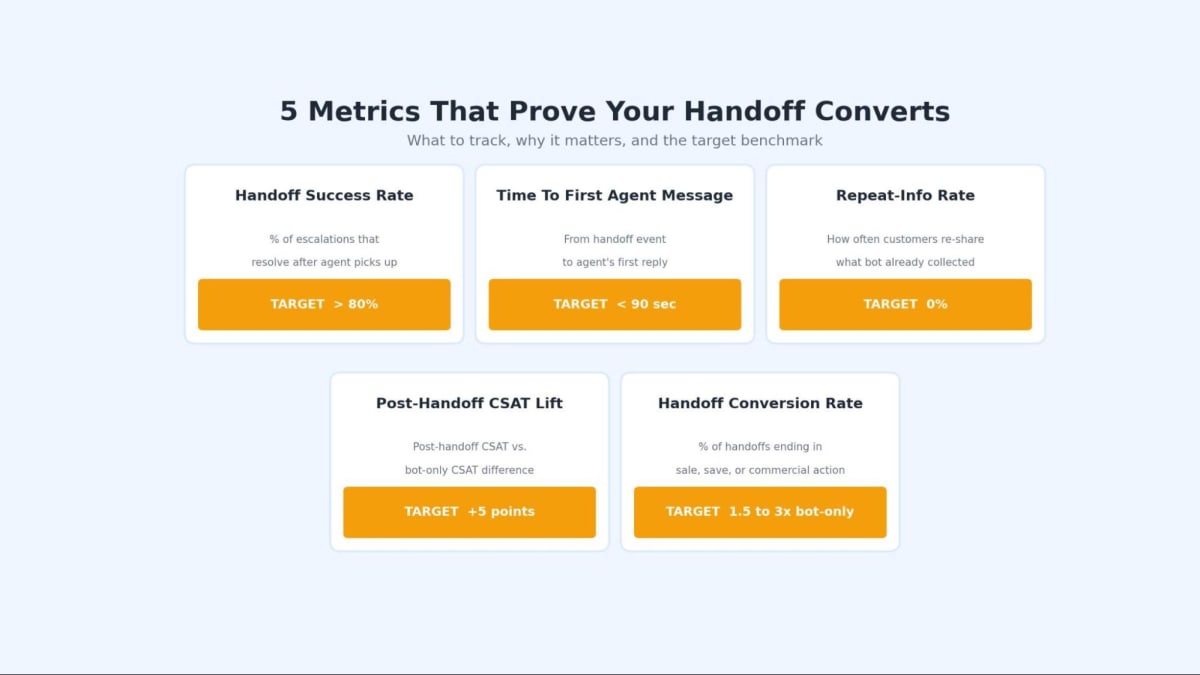

5 Metrics That Prove Your Chatbot To Human Handoff Converts

Vanity metrics like “total handoffs” tell you nothing. These 5 actually show whether your handoff flow is working.

1. Handoff success rate. The percentage of escalations where the customer’s issue resolves after the agent picks up. Target above 80%. Anything below 75% means the bot is escalating prematurely or the agent isn’t equipped to close the loop.

2. Time to first agent message. From the moment the bot fires the handoff event to the moment the agent sends their first response. Target under 90 seconds. Customers tolerate longer waits when you’re sending wait window updates, but the first agent message should always come within the promised window.

3. Repeat-info rate. How often do customers have to repeat information that the bot has already collected? Pull this from transcripts manually for the first month, then automate the check by comparing bot-collected fields against agent-asked questions. Target zero. Even a 5% repeat-info rate kills CSAT.

4. Post-handoff CSAT vs. bot-only CSAT. If customers handed off to humans rate their experience worse than customers who only talked to the bot, your handoff is broken. Post-handoff CSAT should run at least 5 points higher than bot-only, since by definition, handoff conversations are the high-stakes ones where humans add the most value.

5. Conversion rate of handoff conversations. This is the metric most teams forget. What percentage of handoff conversations end in a sale, refund, retention, or other commercial action? Track this as a separate cohort from your overall chat conversion rate. A well-designed handoff should convert at 1.5 to 3x your bot-only conversion rate, because the customers escalating are usually high-intent buyers stuck on a friction point.
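Metrics 3 and 5 are the two that usually need custom calculation. Here is a minimal sketch of both, assuming each handoff has been reduced to two sets of field names: what the bot collected and what the agent asked for. All function and field names are hypothetical.

```python
def repeated_fields(bot_collected: set[str], agent_asked: set[str]) -> set[str]:
    """Fields the agent asked for even though the bot already had them."""
    return bot_collected & agent_asked


def repeat_info_rate(handoffs: list[tuple[set[str], set[str]]]) -> float:
    """Share of handoffs where at least one bot-collected field was asked again."""
    if not handoffs:
        return 0.0
    repeats = sum(
        1 for collected, asked in handoffs if repeated_fields(collected, asked)
    )
    return repeats / len(handoffs)


def conversion_multiple(
    handoff_conversions: int, handoff_total: int, bot_conversions: int, bot_total: int
) -> float:
    """How many times better handoff conversations convert than bot-only ones."""
    if handoff_total == 0 or bot_total == 0 or bot_conversions == 0:
        raise ValueError("need non-zero totals and at least one bot-only conversion")
    return (handoff_conversions / handoff_total) / (bot_conversions / bot_total)


handoffs = [
    ({"name", "order_number"}, {"order_number"}),   # agent re-asked the order number
    ({"name"}, set()),
    ({"name"}, {"shipping_address"}),               # a new question, not a repeat
    ({"email"}, {"email"}),                         # agent re-asked the email
]
print(repeat_info_rate(handoffs))             # 0.5
print(conversion_multiple(30, 100, 15, 100))  # 2.0
```

Treating the two cohorts separately is what makes the comparison possible: the multiple only means something if handoff and bot-only conversations are counted apart.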

🎯 Pro Insight

The 5th metric is the one that gets you budget approval for the next iteration. CFOs care about conversion rate. CSAT and response time live in the support team’s world. If you can show that handoff conversations convert at 2x bot-only, you’ve made the business case for investing more in handoff design.

Design Your Chatbot To Human Handoff Before The Bot Itself

The chatbot to human handoff is where customer experience either compounds or collapses, and most eCommerce stores design the bot first and the handoff later. Reverse that order. Build the trigger matrix, the wait window, and the context block before you write a single bot flow, and your customer experience strategy will compound from there.